Tricorder Tech: A Highly Capable Language Model Locally On Your Phone

Editor’s note: you can never seem to have too much access to data when you are on an Away Team out in the middle of nowhere. The further afield you get, i.e. off world, the more pressing this can seem. Communication with home (Earth) may be sporadic, asynchronous, slow, and time-consuming. Having a personal data device at hand, i.e. a tricorder, with the ability to assist in handling data will be a primary expeditionary tool as we explore other worlds.

This paper describes an effort to run a capable language model on a consumer product (the iPhone 14). The iPhone 14 has already been surpassed by the iPhone 15, and the iPhone 16, due in late 2024, will begin to incorporate Apple’s AI strategy. At this pace we may all have our Tricorders before we’re ready to leave Earth. Maybe we’ll put them in droids and send them out first. Oh yes: when you watch new Star Trek shows such as “Picard”, the producers have been known to use current consumer phones to run the screens on the “props”, i.e. the phones they use for filming serve as tricorders.

We introduce phi-3-mini, a 3.8 billion parameter language model trained on 3.3 trillion tokens, whose overall performance, as measured by both academic benchmarks and internal testing, rivals that of models such as Mixtral 8x7B and GPT-3.5 (e.g., phi-3-mini achieves 69% on MMLU and 8.38 on MT-bench), despite being small enough to be deployed on a phone.

The innovation lies entirely in our dataset for training, a scaled-up version of the one used for phi-2, composed of heavily filtered web data and synthetic data. The model is also further aligned for robustness, safety, and chat format.

We also provide some initial parameter-scaling results with 7B and 14B models trained for 4.8T tokens, called phi-3-small and phi-3-medium, both significantly more capable than phi-3-mini (e.g., respectively 75% and 78% on MMLU, and 8.7 and 8.9 on MT-bench).
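To put the parameter and token counts quoted above side by side, the short sketch below computes how many training tokens each model saw per parameter. It uses only the figures stated in the abstract; the dictionary layout and the function name `tokens_per_parameter` are illustrative choices, not something taken from the paper.

```python
# Figures quoted in the abstract: (parameter count, training tokens).
# The structure and names here are illustrative assumptions.
MODELS = {
    "phi-3-mini": (3.8e9, 3.3e12),
    "phi-3-small": (7e9, 4.8e12),
    "phi-3-medium": (14e9, 4.8e12),
}


def tokens_per_parameter(n_params: float, n_tokens: float) -> float:
    """Return the number of training tokens seen per model parameter."""
    return n_tokens / n_params


if __name__ == "__main__":
    for name, (n_params, n_tokens) in MODELS.items():
        ratio = tokens_per_parameter(n_params, n_tokens)
        print(f"{name}: about {ratio:.0f} tokens per parameter")
```

On these numbers the smallest model sees the most data per parameter, roughly 870 tokens each, compared with roughly 340 for phi-3-medium.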

Introduction

The striking progress of AI in the last few years can be largely attributed to major efforts throughout the world towards scaling up to ever-larger models and datasets. Large Language Models (LLMs) have steadily increased in size from a mere billion parameters just five years ago (GPT-2 had 1.5 billion parameters [RWC+ 19]) to a trillion parameters today.

The impetus for this effort originates in the seemingly predictable improvement one obtains by training large models, the so-called scaling laws [KMH+ 20, HBM+ 22, MRB+ 23]. However, these laws assume a “fixed” data source. This assumption is now significantly disrupted by the existence of frontier LLMs themselves, which allow us to interact with data in novel ways. In our previous works on the phi models [GZA+ 23, LBE+ 23, JBA+ 23] it was shown that a combination of LLM-based filtering of publicly available web data and LLM-created synthetic data enables performance in smaller language models that was typically seen only in much larger models. For example, our previous model trained on this data recipe, phi-2 (2.7B parameters), matched the performance of models 25 times larger trained on regular data.

In this report we present a new model, phi-3-mini (3.8B parameters), trained for 3.3T tokens on larger and more advanced versions of the datasets used in phi-2. With its small size, phi-3-mini can easily be run locally on a modern phone (see Figure 2), yet it achieves a quality that seems on par with models such as Mixtral 8x7B [JSR+ 24] and GPT-3.5.

—

Highly capable language model running locally on a cell-phone. Thanks to its small size, phi-3-mini can be quantized to 4-bits so that it only occupies ≈ 1.8GB of memory. We tested the quantized model by deploying phi-3-mini on iPhone 14 with A16 Bionic chip running natively on-device and fully offline achieving more than 12 tokens per second.
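The ≈ 1.8GB figure follows from simple arithmetic on the parameter count. The sketch below is a back-of-the-envelope check, not the authors' measurement: it counts only the weights (ignoring activations, the KV cache, and any quantization metadata), and the 200-token reply length is a hypothetical value chosen for illustration.

```python
def quantized_weight_bytes(n_params: float, bits_per_weight: int) -> float:
    """Bytes needed to store n_params weights at bits_per_weight bits each."""
    return n_params * bits_per_weight / 8


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a steady decoding rate."""
    return n_tokens / tokens_per_second


N_PARAMS = 3.8e9        # phi-3-mini parameter count, from the text
TOKENS_PER_SECOND = 12  # lower bound reported for the iPhone 14
REPLY_TOKENS = 200      # hypothetical reply length, for illustration only

if __name__ == "__main__":
    four_bit = quantized_weight_bytes(N_PARAMS, 4)
    sixteen_bit = quantized_weight_bytes(N_PARAMS, 16)
    print(f"4-bit weights:  {four_bit / 1e9:.2f} GB ({four_bit / 2**30:.2f} GiB)")
    print(f"16-bit weights: {sixteen_bit / 1e9:.2f} GB")
    seconds = generation_seconds(REPLY_TOKENS, TOKENS_PER_SECOND)
    print(f"{REPLY_TOKENS}-token reply: at most about {seconds:.1f} s")
```

At 4 bits per weight the 3.8 billion parameters take about 1.9 GB (about 1.77 GiB), consistent with the quoted ≈ 1.8GB, versus 7.6 GB at 16 bits. At 12 tokens per second, a 200-token reply would take a little under 17 seconds.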

4-bit quantized phi-3-mini running natively on an iPhone with A16 Bionic chip, generating over 12 tokens per second. — cs.CL

Marah Abdin, Sam Ade Jacobs, Ammar Ahmad Awan, Jyoti Aneja, Ahmed Awadallah, Hany Awadalla, Nguyen Bach, Amit Bahree, Arash Bakhtiari, Harkirat Behl, Alon Benhaim, Misha Bilenko, Johan Bjorck, Sébastien Bubeck, Martin Cai, Caio César Teodoro Mendes, Weizhu Chen, Vishrav Chaudhary, Parul Chopra, Allie Del Giorno, Gustavo de Rosa, Matthew Dixon, Ronen Eldan, Dan Iter, Amit Garg, Abhishek Goswami, Suriya Gunasekar, Emman Haider, Junheng Hao, Russell J. Hewett, Jamie Huynh, Mojan Javaheripi, Xin Jin, Piero Kauffmann, Nikos Karampatziakis, Dongwoo Kim, Mahoud Khademi, Lev Kurilenko, James R. Lee, Yin Tat Lee, Yuanzhi Li, Chen Liang, Weishung Liu, Eric Lin, Zeqi Lin, Piyush Madan, Arindam Mitra, Hardik Modi, Anh Nguyen, Brandon Norick, Barun Patra, Daniel Perez-Becker, Thomas Portet, Reid Pryzant, Heyang Qin, Marko Radmilac, Corby Rosset, Sambudha Roy, Olatunji Ruwase, Olli Saarikivi, Amin Saied, Adil Salim, Michael Santacroce, Shital Shah, Ning Shang, Hiteshi Sharma, Xia Song, Masahiro Tanaka, Xin Wang, Rachel Ward, Guanhua Wang, Philipp Witte, Michael Wyatt, Can Xu, Jiahang Xu, Sonali Yadav, Fan Yang, Ziyi Yang, Donghan Yu, Chengruidong Zhang, Cyril Zhang, Jianwen Zhang, Li Lyna Zhang, Yi Zhang, Yue Zhang, Yunan Zhang, Xiren Zhou

Comments: 12 pages

Subjects: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

Cite as: arXiv:2404.14219 [cs.CL] (or arXiv:2404.14219v2 [cs.CL] for this version)

https://doi.org/10.48550/arXiv.2404.14219


Submission history

From: Sebastien Bubeck

[v1] Mon, 22 Apr 2024 14:32:33 UTC (3,072 KB)

[v2] Tue, 23 Apr 2024 14:49:38 UTC (3,072 KB)

https://arxiv.org/abs/2404.14219

Wired’s “Pocket-Sized AI Models Could Unlock a New Era of Computing” also takes a look at this research.

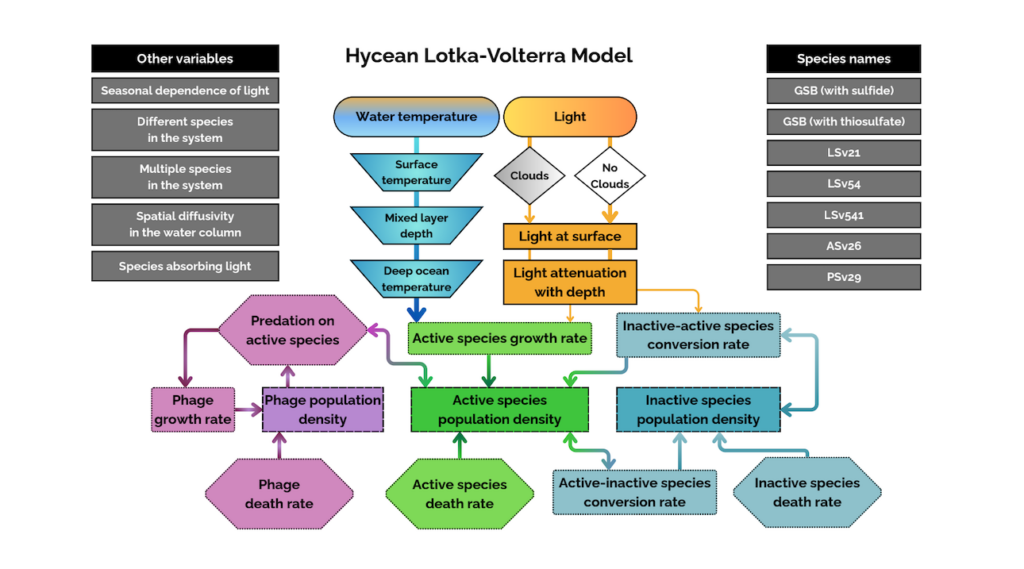

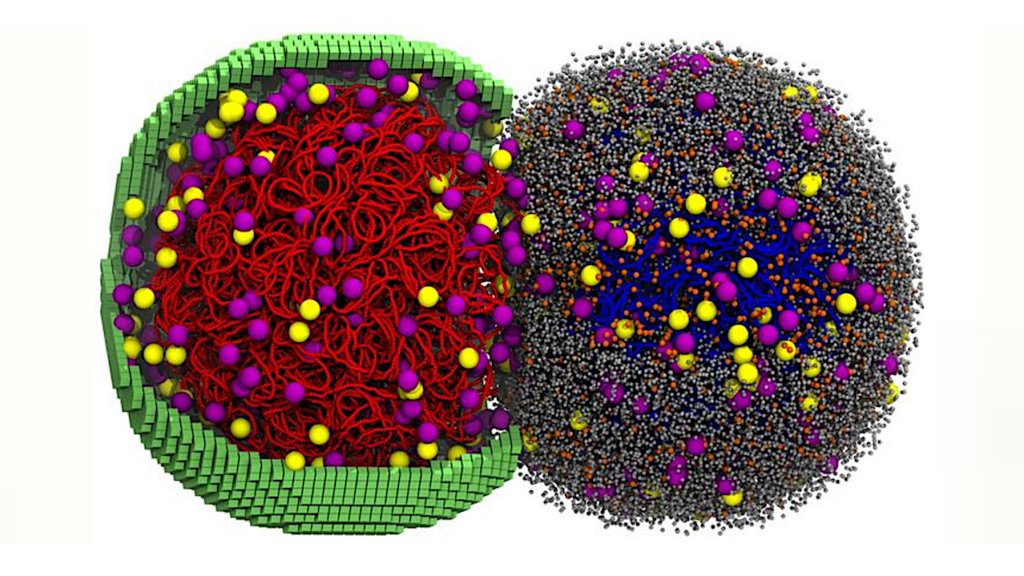

Astrobiology